NVIDIA GPUs offer up to 8x more half-precision arithmetic throughput compared to single precision, thus speeding up math-limited layers.

Figure 1. Training curves for the bigLSTM English language model show the benefits of the mixed-precision training techniques.

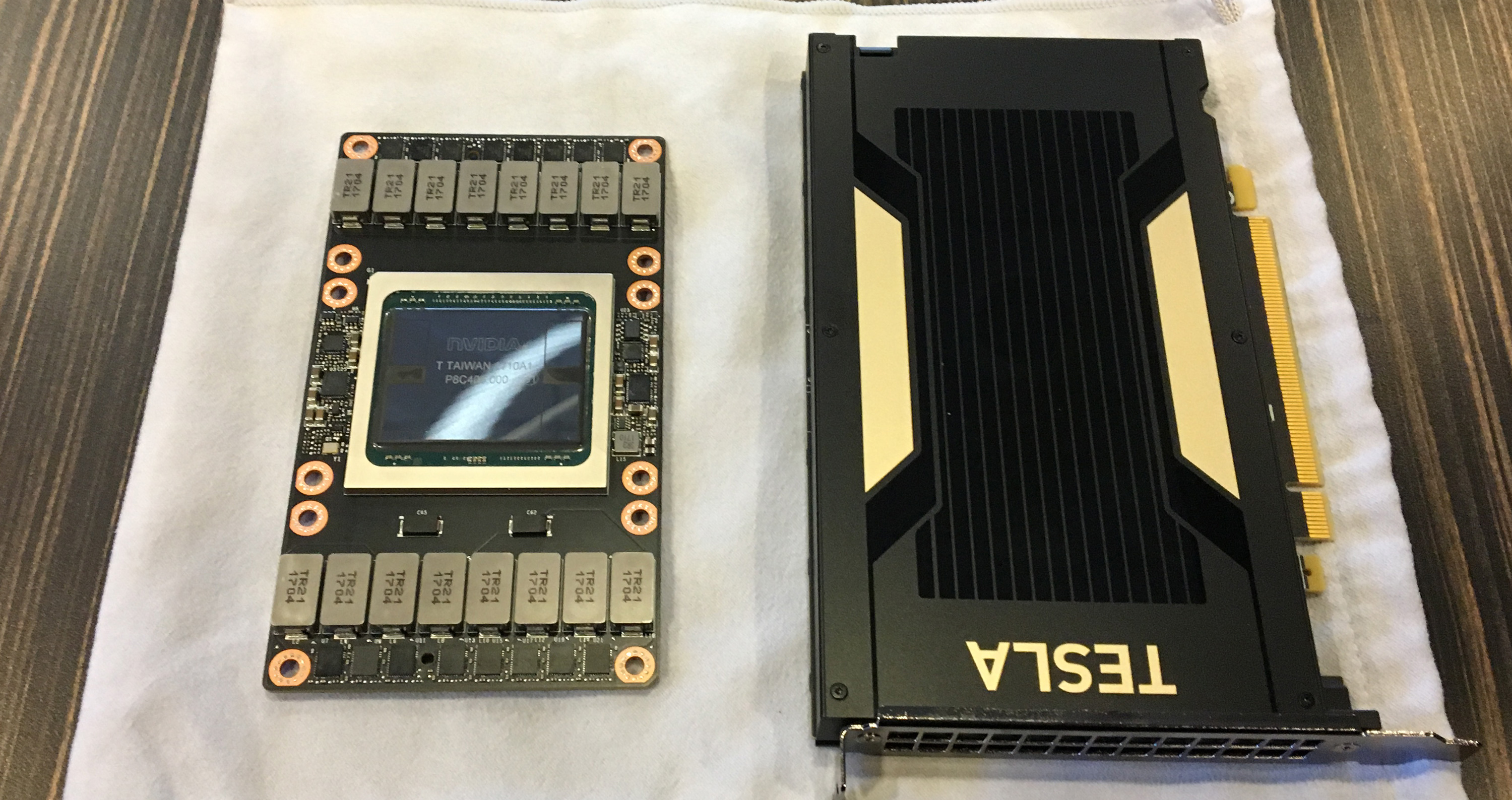

Since the introduction of Tensor Cores in the Volta and Turing architectures, switching to mixed precision has produced significant training speedups, up to 3x overall on the most arithmetically intense model architectures. Using mixed precision training requires two steps:

1. Porting the model to use the FP16 data type where appropriate.
2. Adding loss scaling to preserve small gradient values.

The ability to train deep learning networks with lower precision was introduced in the Pascal architecture and first supported in CUDA 8 in the NVIDIA Deep Learning SDK.

Mixed precision is the combined use of different numerical precisions in a computational method. Compared to higher-precision FP32 or FP64 data, half-precision (FP16) data reduces the memory usage of the neural network, allowing training and deployment of larger networks, and FP16 data transfers take less time than FP32 or FP64 transfers. Single precision (also known as 32-bit) is a common floating point format (`float` in C-derived programming languages); the 64-bit format is known as double precision (`double`).

Deep Neural Networks (DNNs) have led to breakthroughs in a number of areas. DNN complexity has been increasing to achieve these results, which in turn has increased the computational resources required to train these networks. One way to lower the required resources is to use lower-precision arithmetic, which has the following benefits:

- Half-precision floating point format (FP16) uses 16 bits, compared to 32 bits for single precision (FP32). Lowering the required memory enables training of larger models or training with larger mini-batches.
- Execution time can be sensitive to memory or arithmetic bandwidth. Half precision halves the number of bytes accessed, thus reducing the time spent in memory-limited layers.
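To make the FP16 storage size and the loss-scaling step a bit more concrete, here is a minimal sketch using only Python's standard library. It is not how any framework implements mixed precision: the helper names (`to_fp16`, `fp16_grad`), the gradient value, and the scale factor of 1024 are all assumptions chosen for illustration, and the scaling is applied directly to a single gradient value rather than to a loss before backpropagation.

```python
import struct

def to_fp16(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]

# Storage size per value: FP16 takes half the bytes of FP32.
FP16_BYTES = struct.calcsize("<e")
FP32_BYTES = struct.calcsize("<f")

def fp16_grad(grad: float, loss_scale: float = 1.0) -> float:
    """Hypothetical helper: keep a gradient in FP16 after scaling it up,
    then divide the scale back out in higher precision.
    (Python floats are FP64; they stand in for the FP32 unscale step.)"""
    scaled = to_fp16(grad * loss_scale)
    return scaled / loss_scale

tiny_grad = 1e-8  # illustrative value, below FP16's smallest subnormal

# Without scaling, the value underflows to zero when stored in FP16.
unscaled = fp16_grad(tiny_grad)

# With an assumed scale of 1024, the value survives the FP16 round trip.
scaled = fp16_grad(tiny_grad, loss_scale=1024.0)

print(FP16_BYTES, FP32_BYTES)
print(unscaled, scaled)
```

The recovered value is not exact, since it still passes through FP16, but it is no longer flushed to zero, which is the point of scaling before the low-precision step.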
Mixed precision training offers significant computational speedup by performing operations in half-precision format, while storing minimal information in single precision to retain as much information as possible in critical parts of the network.

If your computation requires double precision (FP64), then you need to look at Tesla chips like the K40, which are almost twice as expensive and have the same memory as the Titan X. Most deep learning and machine learning work involves single precision, so a K40 or K80 is a poorer fit than a Titan X.
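One rough way to judge whether a computation needs double precision is to rerun a representative piece of it with values rounded to FP32 and compare against FP64. The sketch below does this with Python's standard library only; the helper names (`to_fp32`, `fp32_sum`) and the test values are assumptions for illustration, not a benchmark of any particular GPU.

```python
import struct

def to_fp32(x: float) -> float:
    """Round a Python float (FP64) to the nearest single-precision value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]

def fp32_sum(values):
    """Emulate FP32 accumulation by rounding after every addition."""
    total = 0.0
    for v in values:
        total = to_fp32(total + to_fp32(v))
    return total

# FP32 has a 24-bit significand, so not every integer above 2**24
# is representable: adding 1 is lost in FP32 but kept in FP64.
big = float(2**24)
fp32_result = to_fp32(big + 1.0)
fp64_result = big + 1.0

# Accumulating many small terms: compare the FP32 and FP64 totals.
values = [0.1] * 10000
fp32_total = fp32_sum(values)
fp64_total = sum(values)
rel_err = abs(fp32_total - fp64_total) / fp64_total

print(fp32_result, fp64_result)
print(fp32_total, fp64_total, rel_err)
```

If the relative error from a check like this is well below what the application can tolerate, single precision is probably enough and the cheaper card is the better buy.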

It's got two chips; undoubtedly it will act as two separate cards from a compute perspective, like all other cards of this kind before it. I have worked with Titans and other NVIDIA gaming cards for scientific computing extensively over the last years, and they work just fine for my purposes, but as always, 'it depends'.

First of all, if you absolutely do need double precision, then they are a bad deal. Of course, most applications, including scientific simulations, are not actually constrained by the limits of single precision floats, but for some applications it does matter. The K40 has more memory per chip and more double precision performance. But if you are sure you don't need either of those (as is the case for my next build), a pair of Titan Zs is a pretty good way to cram an insane amount of single precision performance into a manageable form factor. (Edit: I see the Titan Z, unlike previous gaming cards, has full double precision too, so if you do need double precision, that adds to its value. Personally, I find memory more often limiting than FP precision, though.)

The Titan Z is in essence two Titan Black chips on the same card, each with its own 6GB of VRAM. The main advantages of the Titan Z are that the two chips are synchronized, so load will be distributed evenly, and that it costs ~$2100 versus $1700 for a single Titan Black. The Titan Black itself is designed mainly around single precision units, with limited double precision units and relatively low-end memory.

I would suggest the Titan X is probably a better choice than either the Titan Z or the Titan Black, for two reasons: (1) it costs only $1100, and (2) it has twice the memory. So you can actually build a much more powerful system using two Titan Xs instead of one Titan Z for approximately the same cost. This is especially true for deep learning work. In fact, the NVIDIA DIGITS DevBox features the Titan X as well.
